I have a lot of wonderful interactions on social media. Some less wonderful.

But no matter what you think of me “personally,” my goal in this field is simple: make it better.

My definition of better is: closer to the truth.

I am not a “scientist” by trade but I have a deep reverence for science. And there's one simple rule in science with which no one could argue:

An authority's opinion is not better than an amateur's facts.

This is a rough paraphrase of an actual quote by Richard Feynman, which you should watch here; it's only a minute.

Now, the people I argue with often cite non-authorities in the context of “you think you know more than them?” (Yes.) They don't know my credentials, but the point is: credentials are irrelevant. If what I say isn't persuasive, doesn't make sense, or they simply choose not to believe it, bragging about the number of journals I've published in and/or degrees I have should not change their mind.

And if it does, they need to get out of debating science or statistics forever.

If science were a dick-measuring contest, we'd lose a lot of talented women.

In science, as far as people who do science are concerned, credentials do not matter. At all. Science isn't built on degrees, science is built on knowledge. If someone with fewer degrees has better knowledge, their knowledge is better. Show your work, and that's it, congrats.

This is not to say all opinions are “equal.” If two people make competing arguments, and you are unqualified or incapable of understanding the differences or why they disagree, it is almost always the most reasonable choice to side with the person who has the credentials.

But therein, in my opinion, is the difference (and why I can make an impact in this field).

“If you are unqualified or incapable of understanding the differences or why they disagree”

Poll statistics are not easy, but they are certainly not hard, either. Most anyone with a passive interest in them can understand them. The most basic of poll statistics are somewhere between middle school science and intro to algebra. Seriously.

And those statistics are often the ones misunderstood by many people - up to and including literal experts - such that the public has no chance.

The public doesn't understand the data, they (reasonably) rely on the experts…but the experts spread misinformation.

Here's what that misinformation looks like:

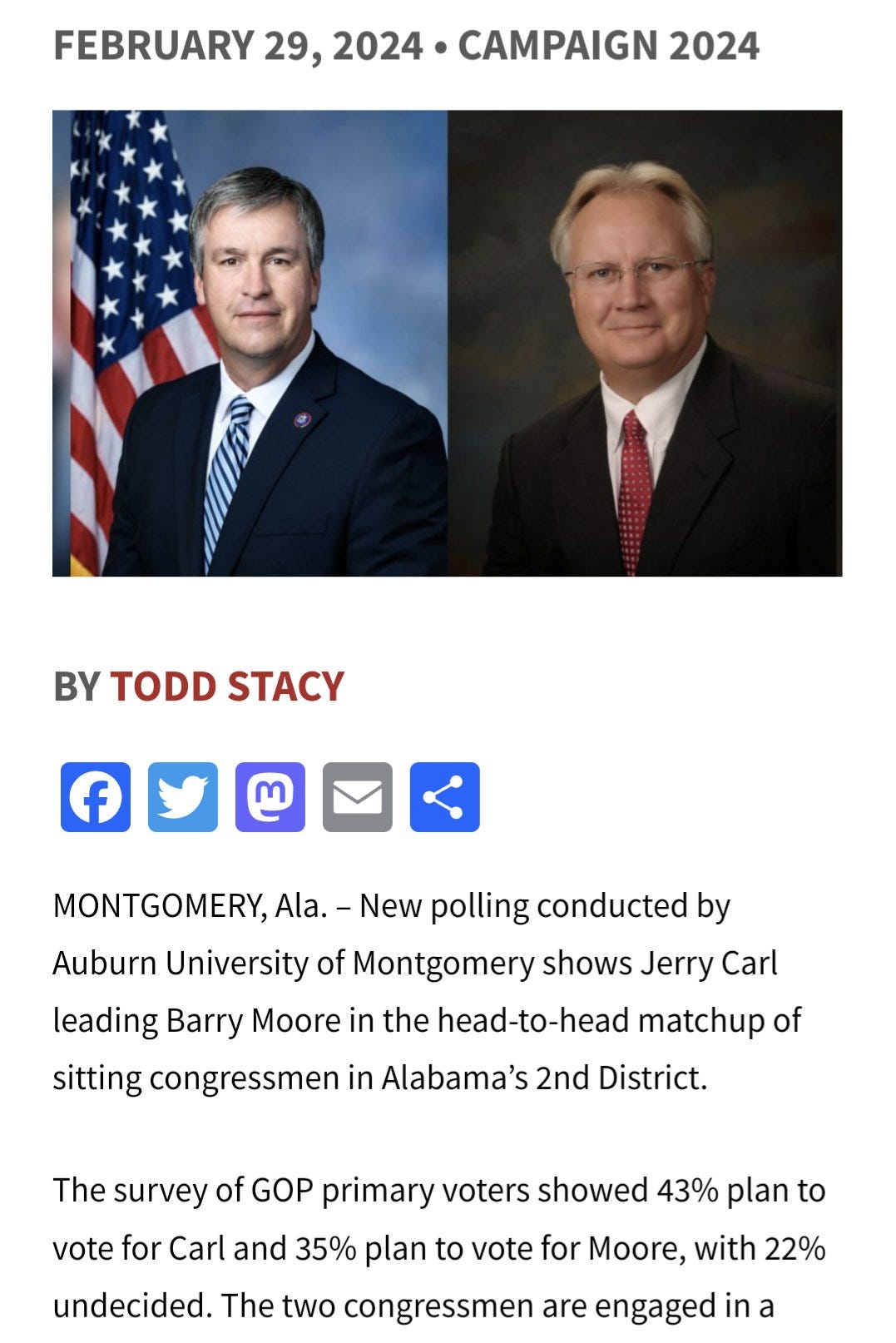

Candidate A: 43%

Candidate B: 35%

Undecided: 22%

Margin of error: +/-3%

US* Analyst: Candidate A up by 8 🧐 him’s a favorite 🧐 poll says he should win by 8 🧐

(If my tone comes across as demeaning, good, deservedly so)

This analysis assumes that undecideds (in this case, 22% of respondents) must eventually split evenly, 11 points to each candidate - yielding a final result of 54-46, or the poll was wrong.
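To make the hidden assumption visible, here is a minimal Python sketch of the arithmetic. The function name and the `share_to_b` parameter are illustrative, not taken from any polling library; the numbers are the ones from the example poll above.

```python
def allocate_undecided(a, b, undecided, share_to_b):
    """Project final shares, giving `share_to_b` (0 to 1) of the undecideds to B.

    Illustrative helper: the rest of the undecideds go to A.
    """
    to_b = undecided * share_to_b
    to_a = undecided - to_b
    return a + to_a, b + to_b


# The "up by 8" reading quietly sets share_to_b to exactly 0.5.
final_a, final_b = allocate_undecided(43, 35, 22, share_to_b=0.5)
print(final_a, final_b)   # 54.0 46.0
print(final_a - final_b)  # 8.0
```

The 8-point spread only survives to the final result if `share_to_b` is exactly 0.5. That value is an input the analyst chose, not something the poll measured.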

As for what this poll actually says:

If we took a census of this constituency (district, state, whatever) right now, and asked them the exact same question with the same options**, then that census should produce, within the stated margin of error and confidence level, the following results:

Candidate A: 43%

Candidate B: 35%

Undecided: 22%

Period. That's it. End of poll analysis. This is what the poll TRIES to tell us.
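As a rough sketch of what that claim amounts to, the snippet below turns the poll's shares and its stated +/-3 margin of error into the range each option would be expected to land in if that census were taken. The names are illustrative, and the confidence level is not modeled because the example poll doesn't state one.

```python
# Shares and margin of error from the example poll, in percentage points.
poll = {"Candidate A": 43, "Candidate B": 35, "Undecided": 22}
MOE = 3


def census_ranges(poll, moe):
    """Return the (low, high) range the poll implies for each option."""
    return {option: (share - moe, share + moe) for option, share in poll.items()}


for option, (low, high) in census_ranges(poll, MOE).items():
    print(f"{option}: {low}% to {high}%")
```

Note that "Undecided" gets a range like any other option. Nothing in the poll says where those respondents end up.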

**(For those interested, I have coined the term simultaneous census to explain what the “true value” is, as intended to be measured by a poll. It is not, as commonly misunderstood by people up to and including experts, the eventual result. Coming soon to a stats class near you).

Lazy analysts, to put it as nicely as I can, have inserted their own assumptions about what undecideds “should” do, and then - assuming their assumptions can't be wrong - say that any discrepancy must be a “poll error.”

Paradoxically (and this is the reason I am intentionally derisive, as opposed to indirectly so), when presented with nearly irrefutable evidence that their assertions were wrong (see the part about “when did you decide”), they do not modify or update their calculations for how wrong “the polls” were. They lie to the public instead.

And that's not cool. It's not good science, either.

If you are reading this - if you can read and do basic math - you can understand the differences in my approach vs others - and why they disagree.

And you are plenty qualified to judge for yourself who's right. And I'm not wrong.

And (updating after the election) the not-so-hypothetical 43-35 with 22% undecided poll was taken in an Alabama House race:

And then the results:

Moore, polling at 35% (and behind by 8 points among decided voters), eventually won.

FiveThirtyEight, professional statisticians, and indeed the entirety of experts within this field would say this poll was “off” by over 11 points. That it had an “11 point” error.

Enormous failure.

In reality, the FIRST STEP in analyzing a poll is not to assume your assumptions can't be wrong…but to account for things that can be known.

Namely, how did undecideds decide?

Simple arithmetic would show that if 16 of the 22 points of “undecided” in the poll went for the person “trailing,” putting Moore at 51% and Carl at 49%, then there was no error.

Even if, say, only 14 of the 22 points did, that would put Moore at 49% and Carl at 51%.

Still an error, sure, but this 5.5-point error is LITERALLY HALF of the error experts would assign to this poll. It's not hard to see how this insane poll error calculation that assumes it can't be wrong could contribute more error to the poll than the poll actually had.
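The scenarios above are easy to check for yourself. This is a small sketch, with illustrative names, that takes the poll's 43-35-22 and projects the final shares for a few different ways the 22 undecided points could break. It does not use the official result, only the poll numbers.

```python
# Poll shares in percentage points; Carl led, Moore trailed.
POLL = {"Carl": 43, "Moore": 35, "Undecided": 22}


def project(points_to_moore):
    """Project final shares if `points_to_moore` of the 22 undecided points
    go to Moore and the remainder go to Carl."""
    carl = POLL["Carl"] + (POLL["Undecided"] - points_to_moore)
    moore = POLL["Moore"] + points_to_moore
    return carl, moore


for pts in (11, 14, 16):
    carl, moore = project(pts)
    print(f"{pts} of 22 to Moore: Carl {carl}, Moore {moore}, Carl margin {carl - moore:+d}")
# 11 of 22 to Moore: Carl 54, Moore 46, Carl margin +8
# 14 of 22 to Moore: Carl 51, Moore 49, Carl margin +2
# 16 of 22 to Moore: Carl 49, Moore 51, Carl margin -2
```

The same poll is compatible with anything from Carl +8 to Moore +2 in this range, depending only on how undecideds decide. Charging the whole gap to the poll treats the 11-11 row as the only possible one.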

*I specify “US analyst” here intentionally. Non-US analysts, believe it or not, do something far worse and something that would be considered scientific malpractice, if not for its “traditional” usage.

I may cover that at a later date.

Found this Substack via Threads! 100% agree with what you're describing here in terms of poll commentary.

It's kind of funny that I wrote about a very similar thing in the wider context of measures - especially the discussions around inflation and the inflation measure.

A measure is just a measure - interpreting what it means beyond that might not be obvious. https://www.nominalnews.com/p/dont-tie-yourself-to-a-number-data-measurement