In 2020 and 2022, I released my forecasts before others (and possibly before anyone else), simply because those were the major projects I was working on.

This year, as you may know, I'm finishing up something else.

I promise my forecast will come soon.

Today, Silver released his forecast - and it's not exactly surprising.

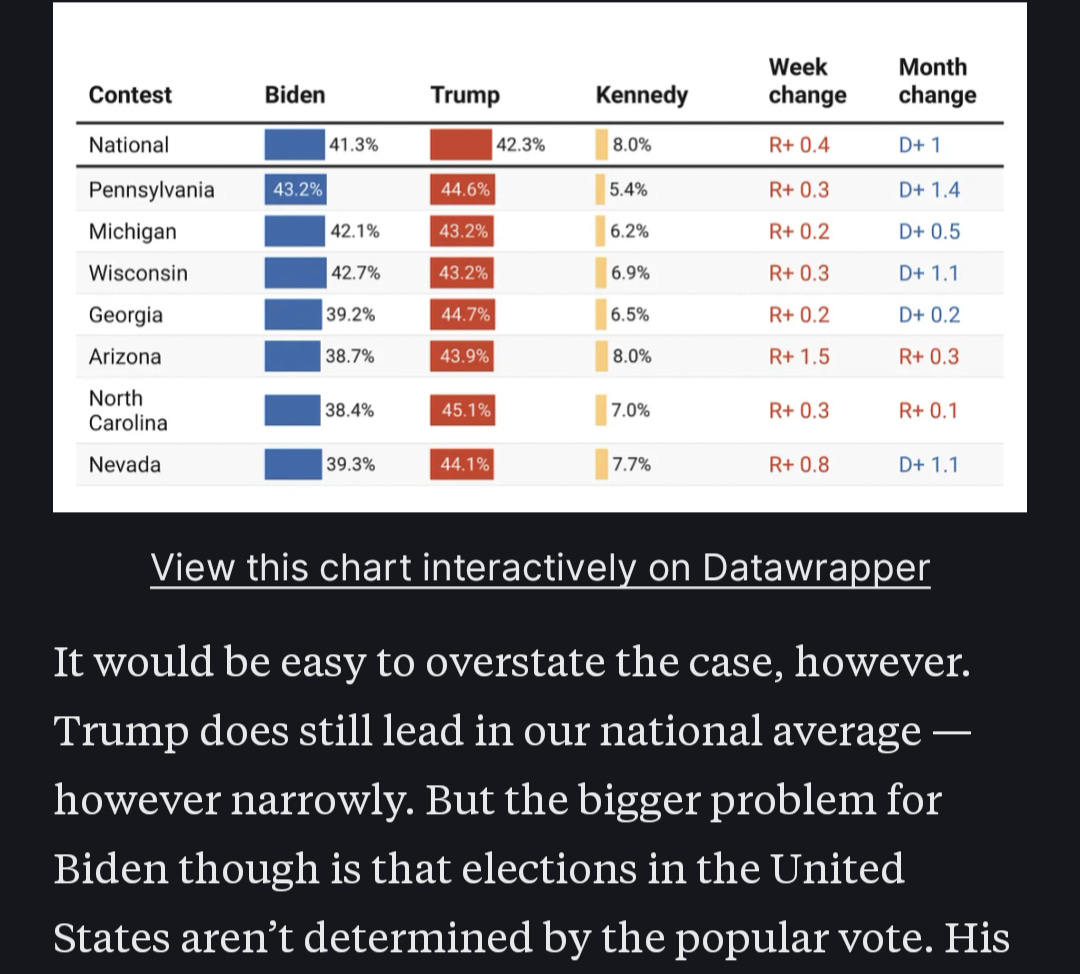

Trump is “ahead” in the polls, therefore - Silver concludes - Trump is favored. This is what passes as objectivity, I guess.

I wrote about this in May, because I knew it was coming, so I'm tapping the sign again.

If you haven't, you should read that article linked above. There are (and, given the nature of the race, probably will continue to be) a non-negligible number of undecided voters leading up to the election.

How we forecast them can easily be the difference between Biden as a 65% favorite or a 35% underdog.
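To make the arithmetic concrete, here is a minimal sketch of how the undecided split alone can move a win probability. Everything in it is an assumption for illustration: the poll numbers are hypothetical, `win_probability` is a made-up helper, and the normal error with a 4-point standard deviation is a placeholder, not anyone's actual model.

```python
from statistics import NormalDist


def win_probability(biden, trump, undecided, biden_share_of_undecided, error_sd=4.0):
    """Probability Biden finishes ahead after allocating undecided voters.

    All vote shares are in percentage points. error_sd is an assumed
    standard deviation for the error in the final margin (hypothetical).
    """
    biden_final = biden + undecided * biden_share_of_undecided
    trump_final = trump + undecided * (1 - biden_share_of_undecided)
    margin = biden_final - trump_final
    # Chance that the true margin is above zero, given normal error around it.
    return 1 - NormalDist(mu=margin, sigma=error_sd).cdf(0)


# Hypothetical poll: Trump 46, Biden 44, 10 undecided.
for share in (0.4, 0.5, 0.6, 0.7):
    p = win_probability(44, 46, 10, share)
    print(f"Undecideds break {share:.0%} for Biden -> Biden win probability {p:.0%}")
```

With these made-up inputs, the same poll gives Biden roughly a 31% chance if undecideds split evenly and roughly a 69% chance if they break 70/30 his way. The poll didn't change; only the assumption about undecideds did.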

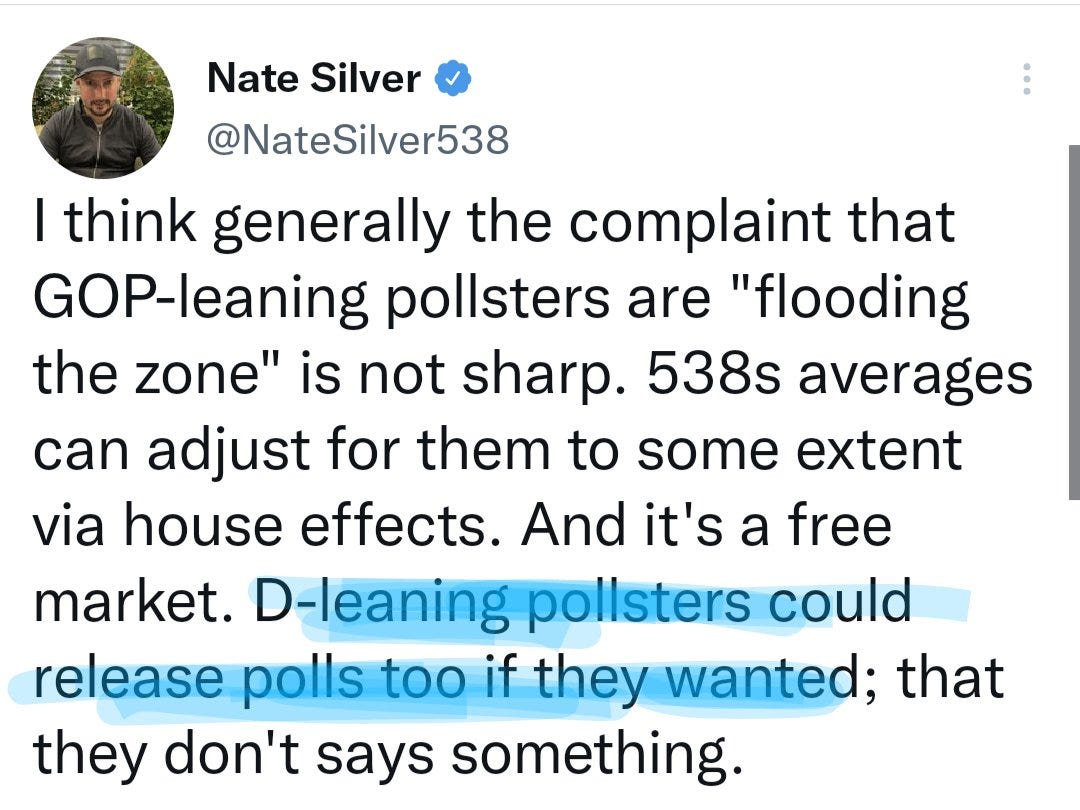

To be fair, Nate did post some good things about forecasting in general (in addition to admitting he *wants* Biden to win, which is refreshing at least).

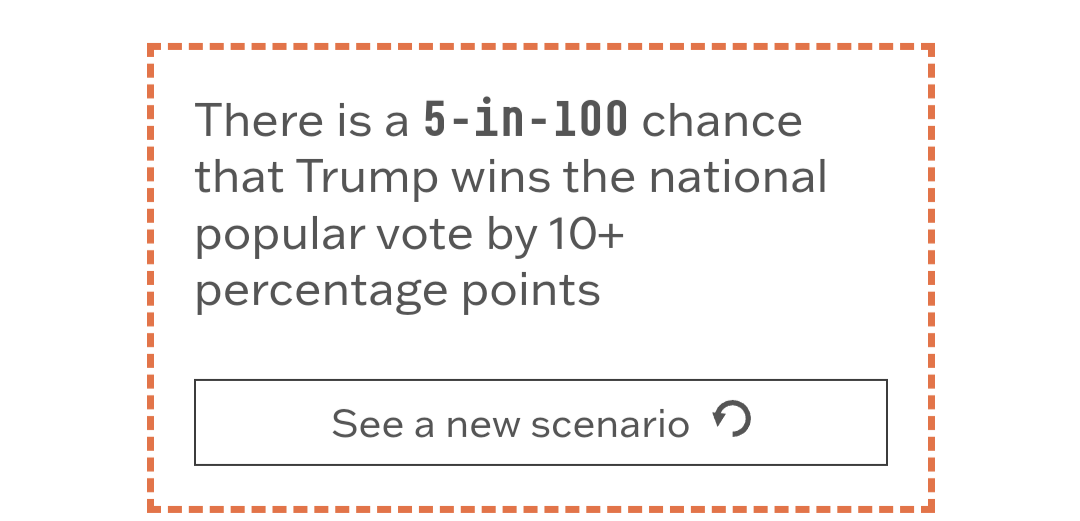

But at the end of the day, the wide tail behavior of the model is still problematic. There are a few examples I found (I didn't pay for the full forecast) but this one is the headliner:

RFK 5% to take a state (or, I guess, district)?

Nah.

There's no amount of uncertainty that gets us there.
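A rough way to see what a 5% chance implies: under a plain normal error assumption, a trailing candidate wins 5% of the time only if the deficit is about 1.645 standard deviations. The sketch below backs out the error size needed for a few hypothetical deficits. The deficits are invented for illustration (they are not RFK's actual polling), `implied_error_sd` is a made-up helper, and a fat-tailed model would give different numbers.

```python
from statistics import NormalDist


def implied_error_sd(deficit_points, win_probability):
    """Standard deviation of margin error needed for a candidate trailing by
    deficit_points to win with win_probability, assuming normal errors."""
    if not 0 < win_probability < 0.5:
        raise ValueError("win_probability must be between 0 and 0.5 for a trailing candidate")
    # Number of standard deviations the deficit must represent.
    z = NormalDist().inv_cdf(1 - win_probability)
    return deficit_points / z


# Hypothetical deficits, in points, for a candidate given a 5% chance.
for deficit in (10, 20, 30):
    sd = implied_error_sd(deficit, 0.05)
    print(f"Trailing by {deficit}: a 5% chance needs an error SD of about {sd:.1f} points")
```

The bigger the deficit, the more implausible the polling error you have to assume to justify 5%.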

And for the record, this “ah, uncertainty…it's only (insert month here)” happens every cycle. Then October rolls around, nothing changes, and the wild tail behavior remains the same.

Not pertaining to tails, Nate's 22% probability for Biden in Arizona is bad, too - seems to be due to overweighting polls by recency again, including partisan ones.

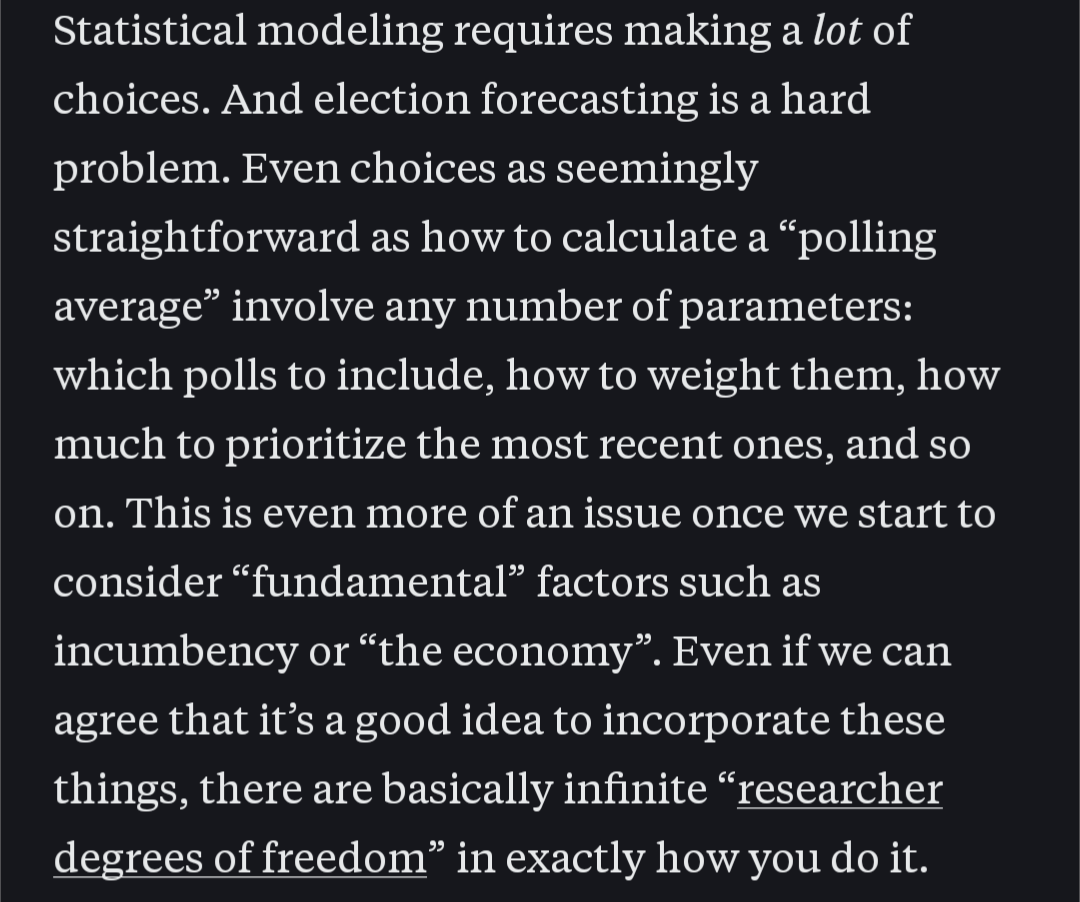

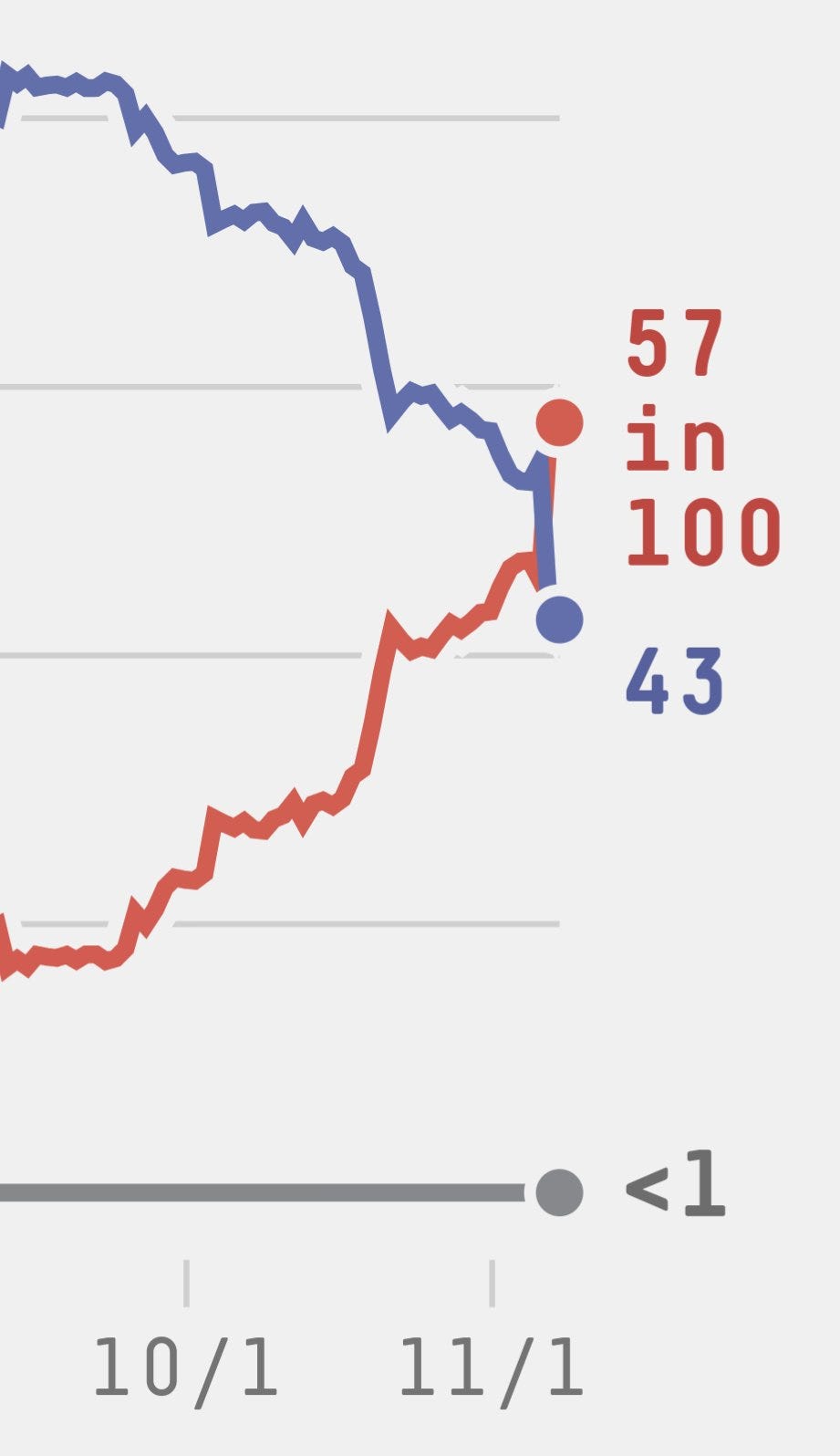

On that note, it was 2022 where I really did some damage forecasting. In 2016 (unpublished), 2018, and 2020, my forecasts were pretty similar to 538's. But in 2022 I noticed some problematic trends and called them out in advance - and accounted for them in my forecast.

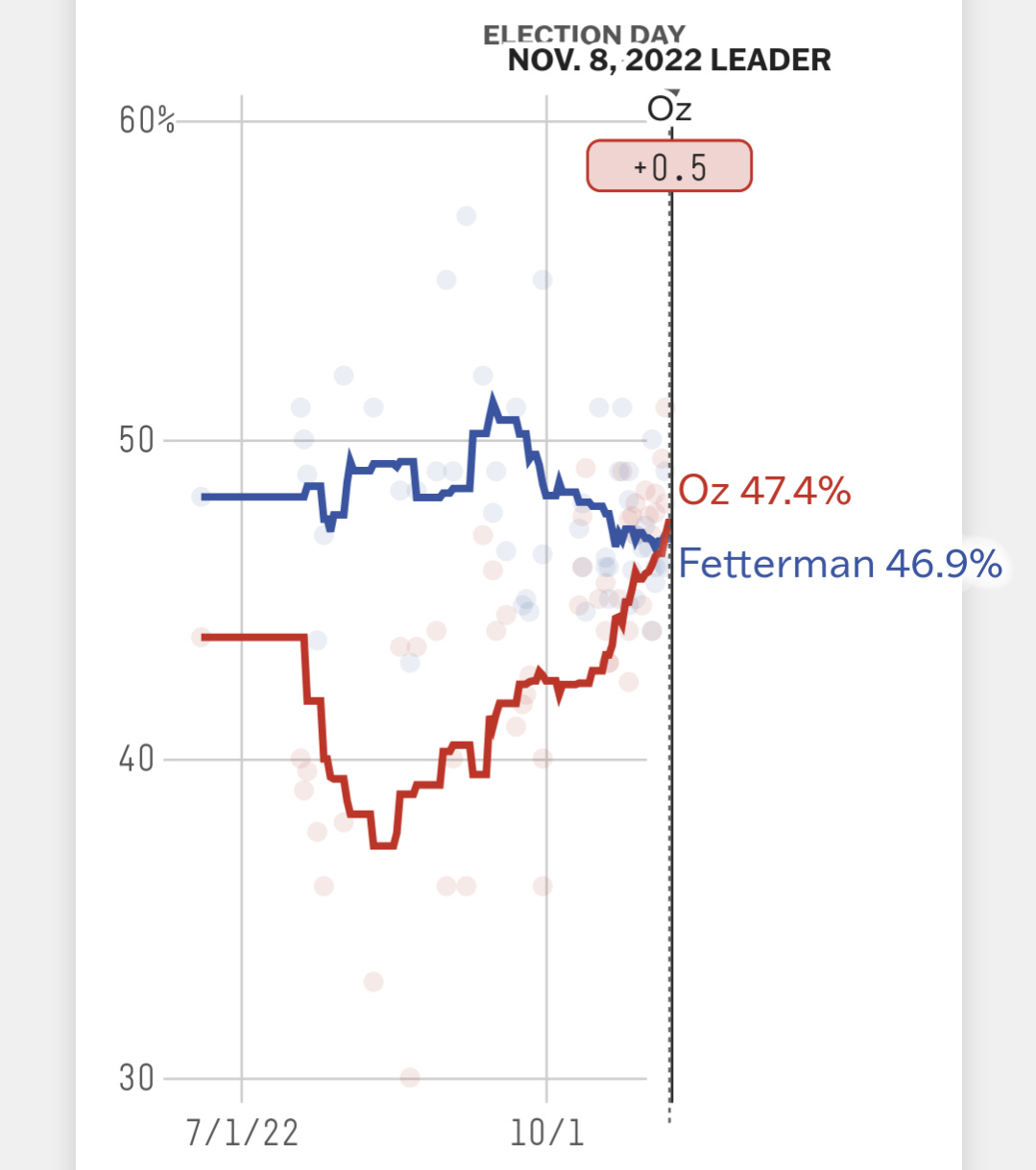

Most blatantly, in Pennsylvania, where 538/Nate's model did this:

Many people have pointed out that Nate’s model takes data from other forecasters, and other forecasters moved their forecast towards Oz so he moved his. Fine.

Herding is a problem, but forecasters can forecast however they want!

But that doesn't explain this:

Their polling average - though it had a fair number of undecided - said Oz was ahead among decided voters.

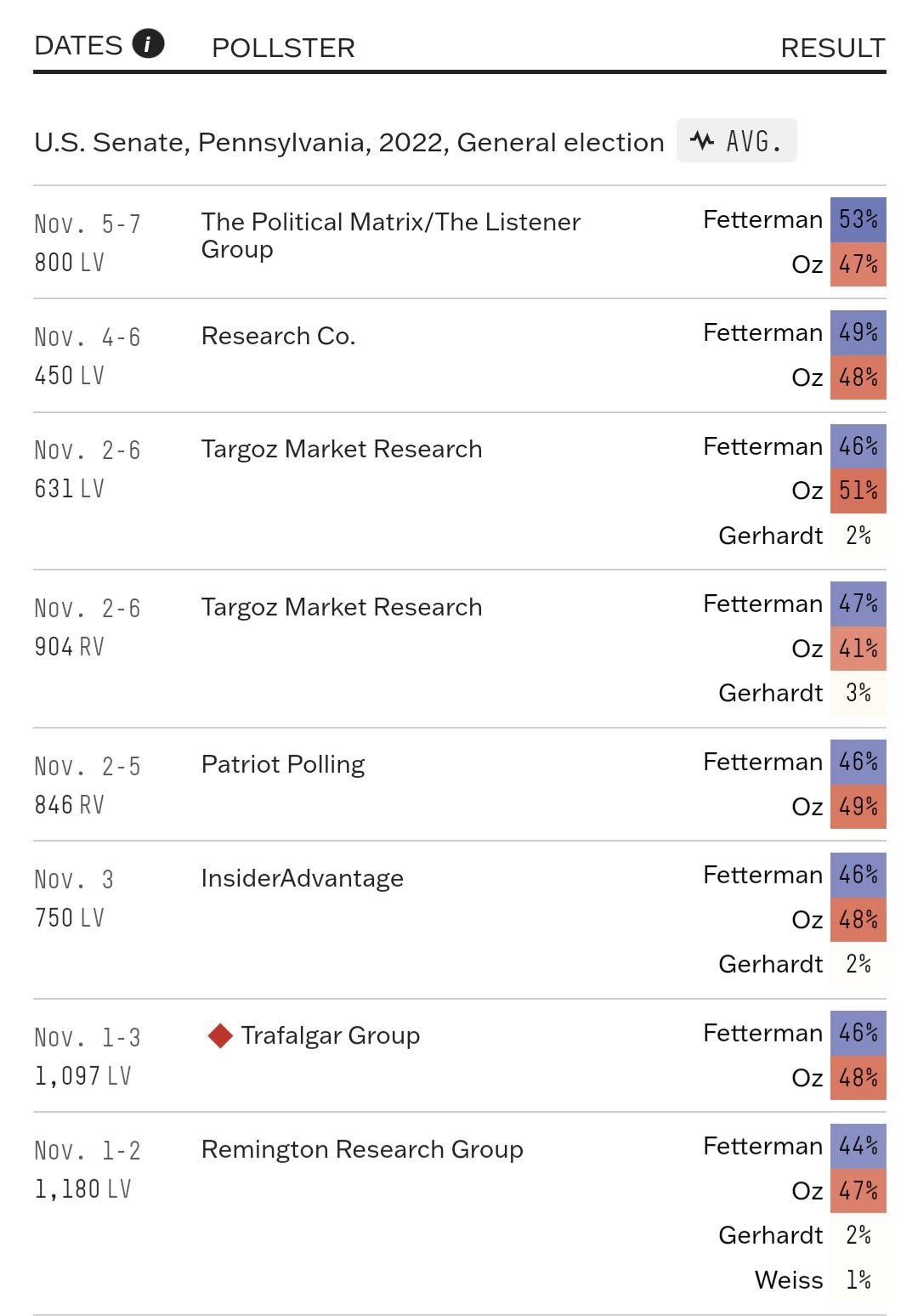

Here are the polls that improved Oz’s standing in their average by 3 points:

If you're confused by how mostly partisan polls, a few newbies, and a couple of polls that are good for Fetterman can improve Oz’s position so much, don't be. This is normal for their model!

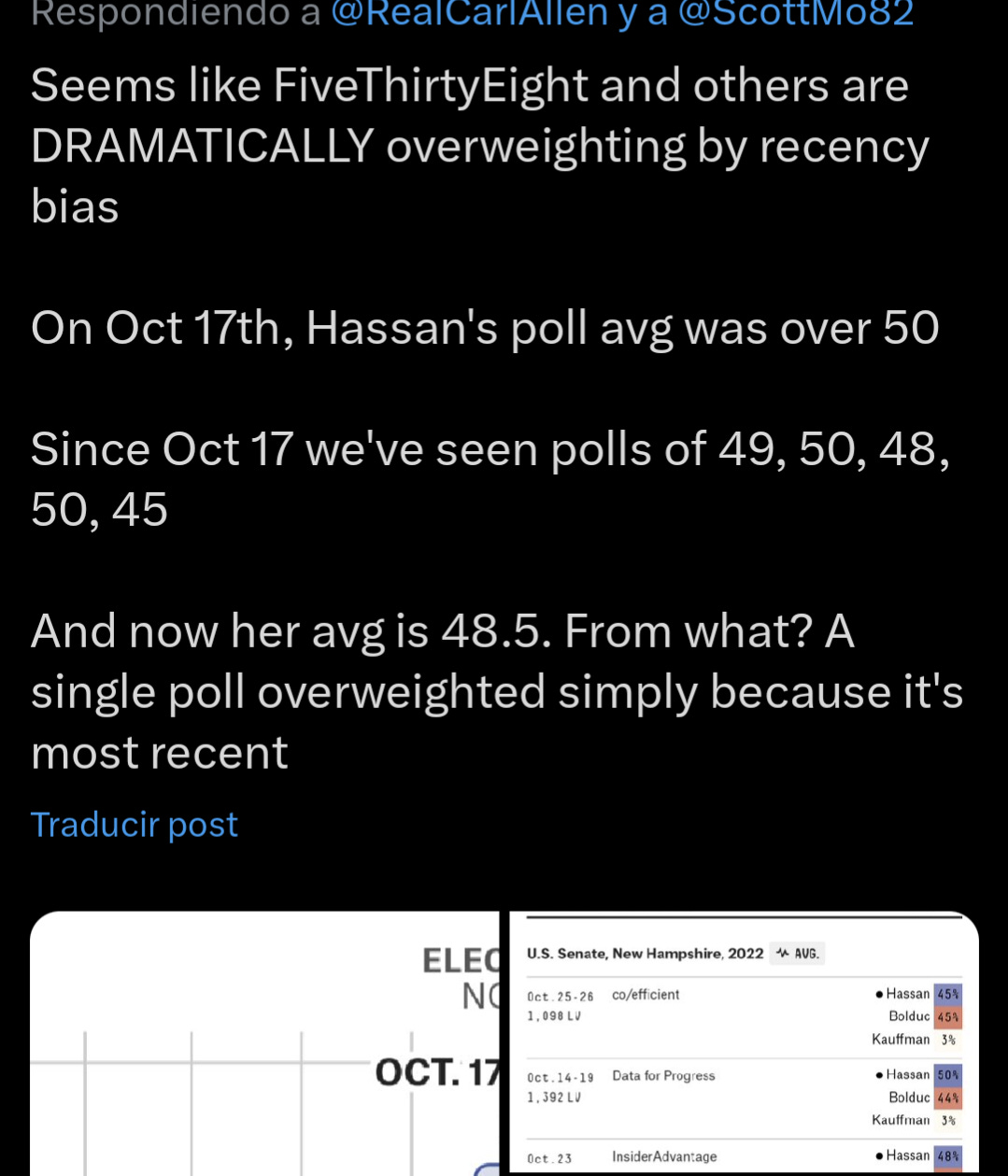

In New Hampshire, where I said Hassan and Dems should be strong favorites, FiveThirtyEight was less bullish (I gave Dems an 85% chance to win NH; they gave 73%).

More concerning than the numbers is the chaotic movement.

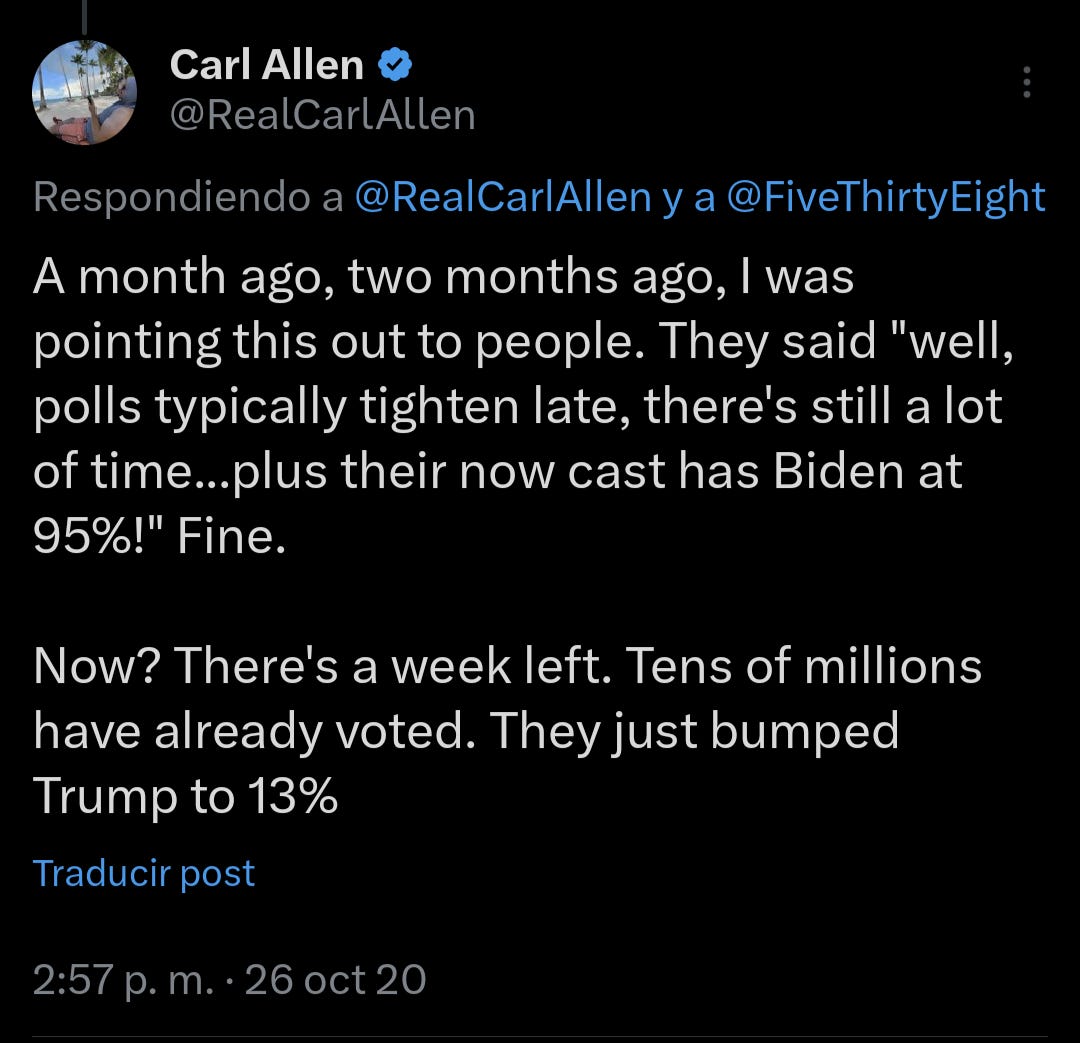

To quote someone who put it well:

There are lots of things that happen (or don't happen) that should impact your forecast. No question. But only very sudden, dramatic (and unforeseen) changes should impact a good forecast dramatically.

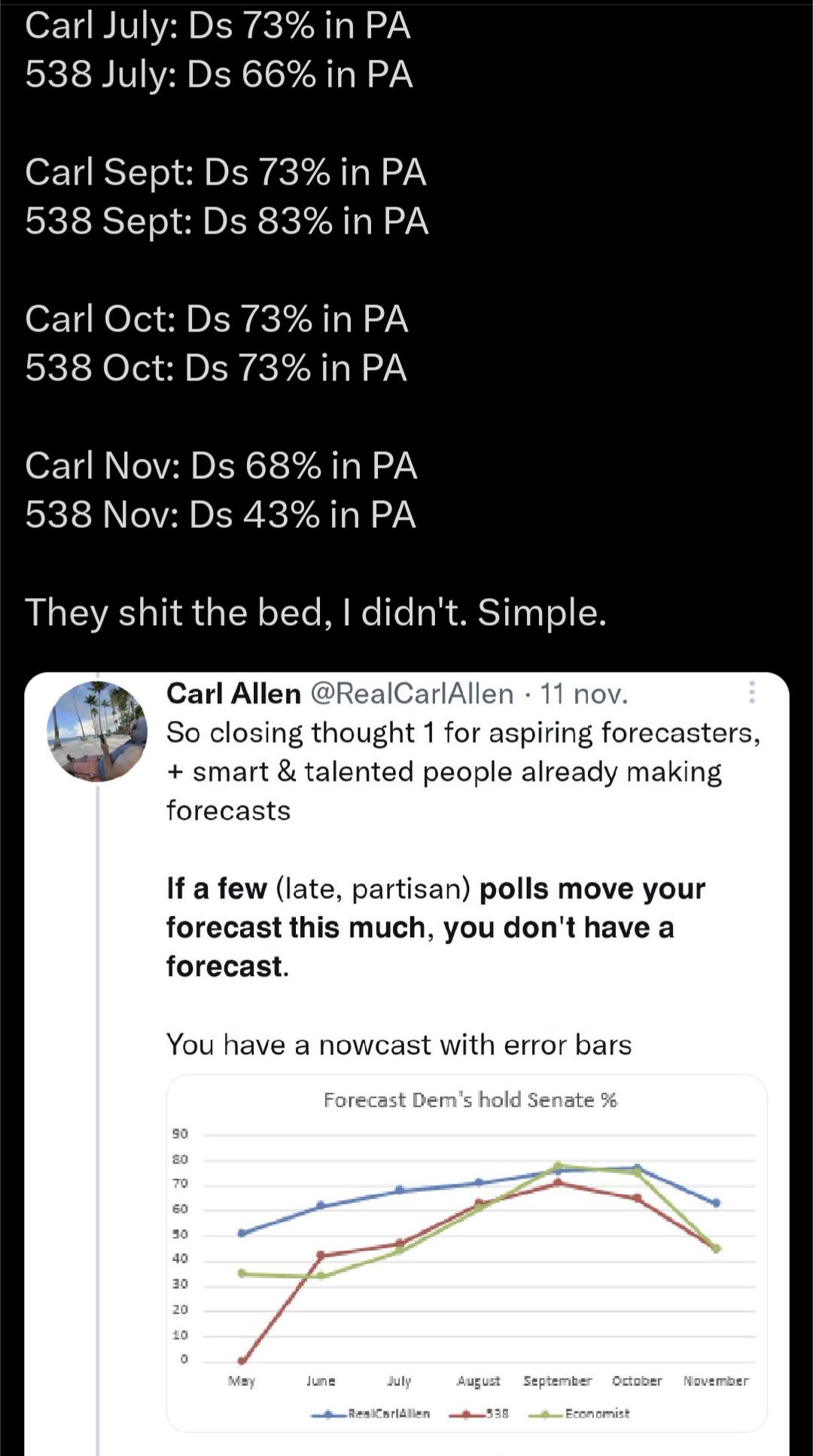

Here's a side-by-side of 538’s PA Senate forecast with mine.

Carl in May: Ds 56% in PA

538 in May: Ds 35% in PA

Carl July: Ds 73% in PA

538 July: Ds 66% in PA

Carl Sept: Ds 73% in PA

538 Sept: Ds 83% in PA

Carl Oct: Ds 73% in PA

538 Oct: Ds 73% in PA

Carl Nov: Ds 68% in PA

538 Nov: Ds 43% in PA
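For anyone who wants the movement spelled out, here is a small sketch that takes the numbers listed above and computes the change between consecutive snapshots, plus the total distance each forecast traveled. The `swings` helper and the "total movement" measure are just one illustrative way to summarize volatility, not a standard metric.

```python
# Dem win probability in the PA Senate race, in percent, as listed above.
forecasts = {
    "Carl": {"May": 56, "July": 73, "Sept": 73, "Oct": 73, "Nov": 68},
    "538": {"May": 35, "July": 66, "Sept": 83, "Oct": 73, "Nov": 43},
}


def swings(series):
    """Change in percentage points between consecutive snapshots."""
    values = list(series.values())
    return [later - earlier for earlier, later in zip(values, values[1:])]


for name, series in forecasts.items():
    moves = swings(series)
    total = sum(abs(m) for m in moves)
    largest = max(moves, key=abs)
    print(f"{name}: swings {moves}, total movement {total} pts, largest single swing {largest:+d} pts")
```

By this measure my forecast moved 22 points in total over the cycle and 538's moved 88.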

Now, regardless of how highly you think of me, or how highly I think of myself, my forecasts are not a gold standard. They shouldn't even be a bronze standard. But given the absolute inadequacy of quality work done in the field right now, I'm a fair comparison.

From May to September, 538 went from 35% to 83%.

That's a big move. But May was pre-Oz, Fetterman's health was a concern, and lots more data came in. 83% is a pretty bold number.

Then, in less than two months, it receded to 43%.

Based on what?

Again, there are plenty of examples of this happening, but it all goes back to the model itself: overweighting polls by recency and refusing to consider the possibility that partisan polls could be a problem.

It was pretty clear their model did this, but it helped that Nate outright said it.

FiveThirtyEight, with the new guy, is not faring much better.

Again we have a 5% probability assigned to an event that should be much lower.

Since I started doing this, I've considered that I could be wrong.

But with every data point it's appearing more likely that they are.

Biden is favored, right now. Unless several things change in Trump's favor, it will probably remain that way.

Forecasting isn't looking at data and saying “this is what this data means for right now.”

It also requires looking at likely events leading up to the election - such as Dems officially nominating Biden and, you know, campaigning.

Republicans have bad candidates on the ballot in North Carolina and Arizona. This will impact the Presidential race.

Oh, and if you think Roevember isn't going to matter, think again.

Dems have an abortion initiative that will help them in Florida (which I think is extremely likely to pass, with 65% when it only needs 60%), and this issue is not going away nationwide.

It's not as though we can look at 2022 midterms or 2023 special elections and say “but Dems did well therefore will do well in 2024.”

But we can make some informed assumptions based on 2020, 2022, and 2023 about who these “undecided” voters are, and whether Dems will turn out in solid numbers for a candidate they largely don't like - when they didn't for Clinton in 2016.

It's not to say Biden can't lose. He absolutely could. But it is to say I'd be more surprised by a Biden loss than a Biden blowout.

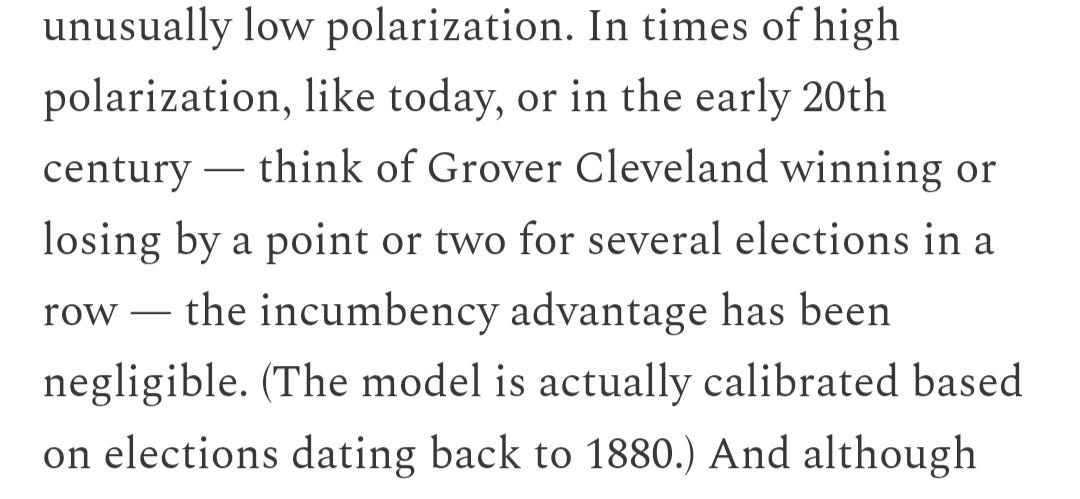

The 2024 election will be historically weird; that is the only guarantee. Fitting your model around Grover Cleveland’s performance as an incumbent (as Nate said his model does) is not something I'd classify as a good practice.

Do you think debates matter? Given the fact that we are now having this discourse with all kinds of people freaking out...